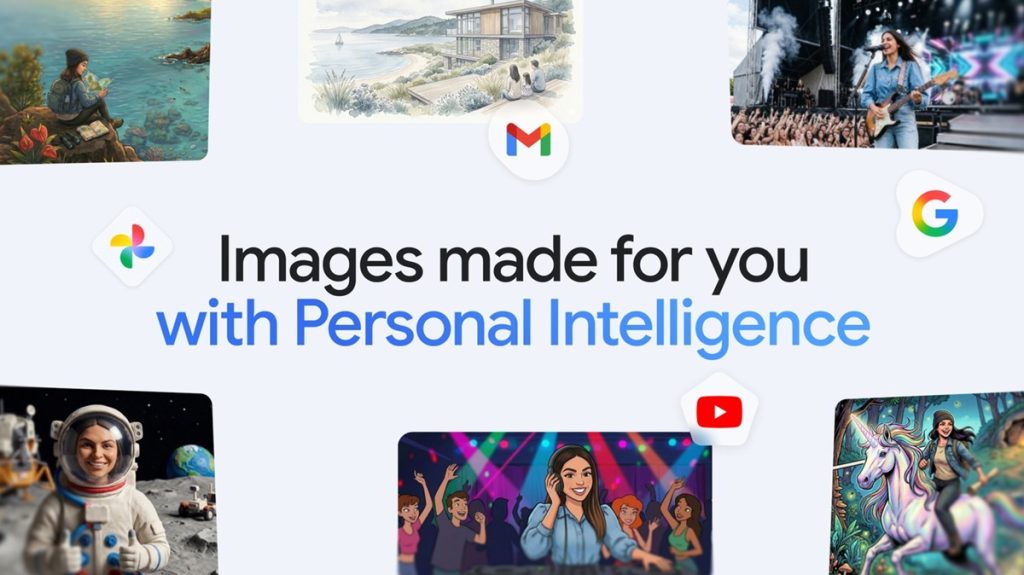

Google is rolling out a new Personal Intelligence feature in the Gemini app that makes AI image generation more context-aware and personalized. The update combines Nano Banana 2 with Google Photos integration so users can create images based on their lifestyle, preferences, and real-world memories without needing long prompts or manual uploads.

Personalized images in the Gemini app

Gemini is shifting from prompt-heavy generation to context-driven creation. Once users connect their Google apps, the system automatically uses available context to understand intent from simple prompts like “Design my dream house.”

Instead of requiring detailed instructions or reference images, Gemini fills in missing details using signals from connected services. This makes image generation faster and more aligned with the user’s personal style, interests, and past activity, while reducing the effort needed to describe ideas.

Key features

- Powered by the Nano Banana 2 image generation model

- Uses Personal Intelligence for context-aware outputs

- Pulls user context from connected Google apps and services

- Works with simple prompts, no long descriptions needed

- Integrates Google Photos for real-life visual grounding

- Uses labeled people and pets for identity-based generation

- Generates images featuring users, family, and close contacts

- Supports multiple artistic styles (watercolor, sketch, claymation, oil painting)

- Allows iterative refinement through feedback and regeneration

- Offers a “+” option to switch reference images from Google Photos

- Sources view shows which image was used and how it influenced the output

Starring you and your loved ones

With Google Photos integration, Gemini can recognize and use labeled people and pets to include real individuals in generated images. This allows the system to build scenes featuring the user’s personal circle in both realistic and stylized formats.

Users can turn real-life moments into creative outputs or reimagine them in different artistic styles without uploading files or manually selecting reference images. This works because the system already understands relationships through existing photo labels.

Creative control and transparency

If a generated result is inaccurate or does not match expectations, users can provide feedback and regenerate the image with revised instructions.

A built-in “+” option allows switching reference images from Google Photos to influence different outcomes. The Sources view shows which photo was selected for generation and explains how it contributed to the final result, giving users visibility into the process.

Privacy and data usage

Google states that private Google Photos libraries are not used directly to train Gemini models. Instead, model improvements rely on limited interaction data such as prompts and generated responses.

The feature is opt-in, and users can manage or disconnect connected Google apps at any time through settings, which keeps control over personal data usage in their hands.

Availability

The feature is rolling out over the coming days to Google AI Plus, Pro, and Ultra subscribers in the United States. Google also plans to expand it to Gemini on Chrome desktop and more users in future updates.