Meta has introduced Muse Spark, a new AI model developed by Meta Superintelligence Labs. It is the first model in a broader Muse series designed to advance multimodal understanding, reasoning, and agent-based workflows in support of Meta’s goal of personal superintelligence.

Muse Spark is positioned as a foundation model that supports text and image inputs, structured reasoning, tool integration, and multi-agent collaboration. It powers Meta AI in the Meta AI app and on meta.ai.

Muse Spark: Multimodal AI model

Muse Spark is a multimodal AI model that processes both text and images within a unified system. It is designed to handle reasoning tasks while combining information from different input types.

Key aspects include:

- Support for text and image inputs

- Integrated reasoning capabilities

- Tool usage support

- Visual chain-of-thought processing

- Multi-agent execution framework

The model is designed to be small and fast while handling complex tasks across domains such as science, mathematics, and health. It also represents a broader overhaul of Meta’s AI stack, supported by infrastructure investments including the Hyperion data center.

Key Features

- Multimodal reasoning with support for text and image inputs, enabling image-based queries, visual analysis, and problem-solving across diagrams, objects, and scenes.

- Tool integration and multi-agent support for executing multi-step workflows and coordinating multiple agents.

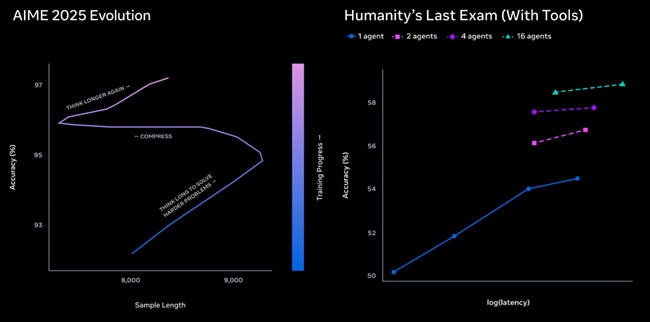

- Contemplating mode, where multiple agents reason in parallel to handle complex tasks, achieving 58% on Humanity’s Last Exam and 38% on FrontierScience Research.

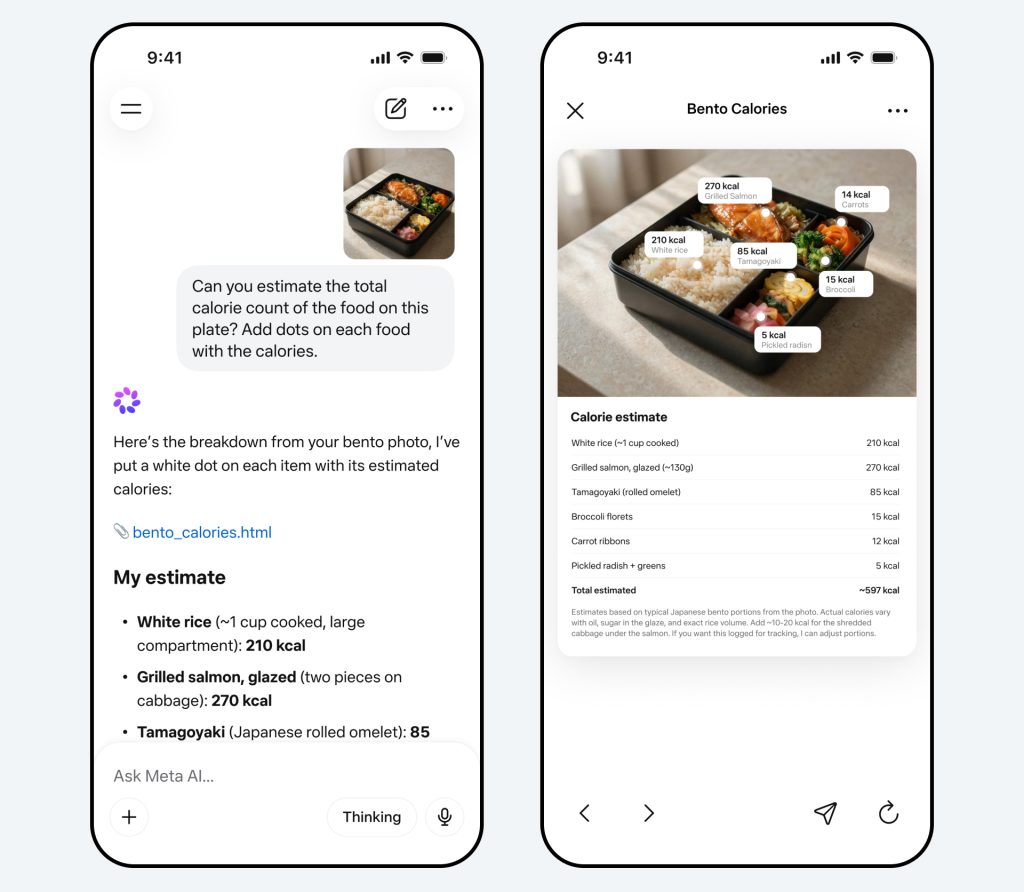

- Multimodal assistance for interpreting images, explaining visual content, handling visual queries, and enabling interactive outputs such as simulations and mini-apps.

- Health-related capabilities developed with input from over 1,000 physicians, supporting structured explanations of nutrition, physical activity, and interpretation of charts and visuals.

- Visual creation and coding support for generating websites, dashboards, mini-games, and simulations from prompts.

- Context-aware assistance for shopping guidance, location-based suggestions, content discovery, and visual analysis of user inputs.

Scaling Strategy

Muse Spark is developed using a multi-stage scaling approach:

1. Pretraining

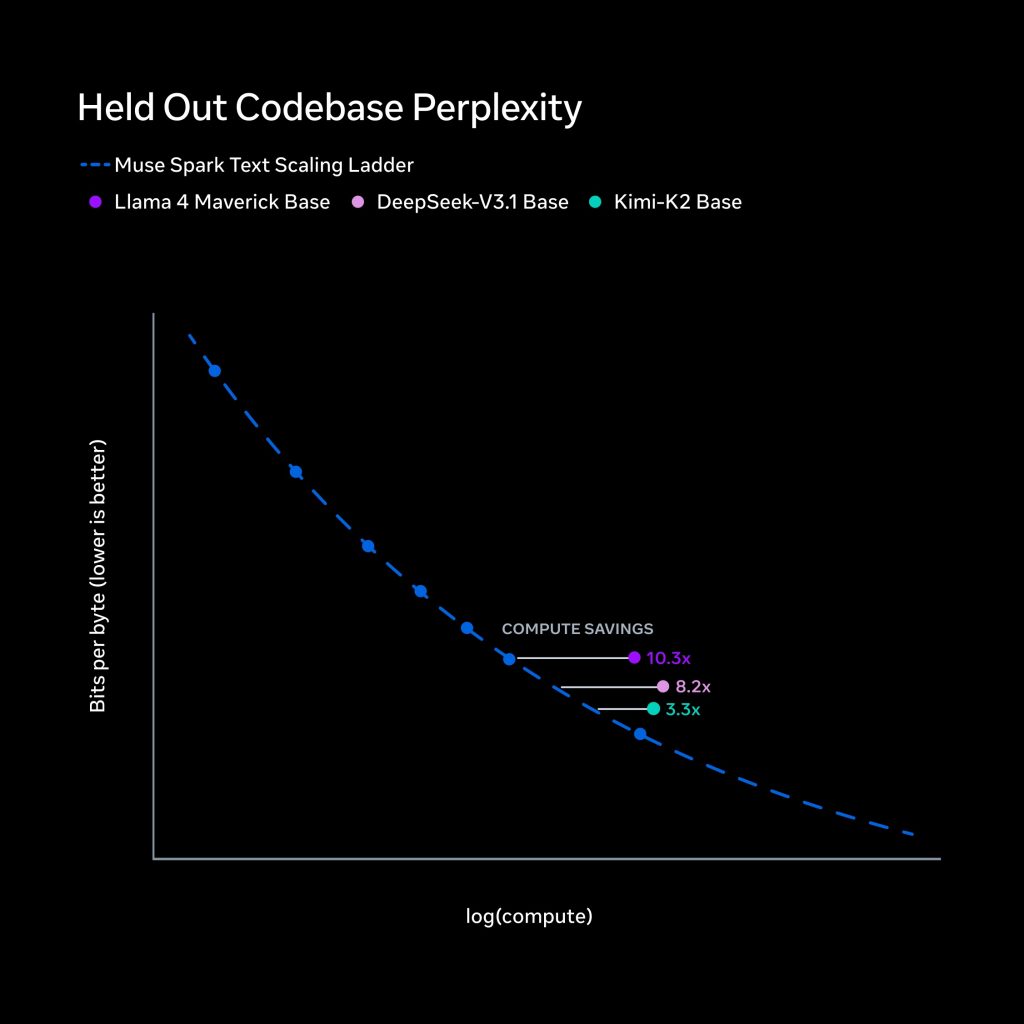

Pretraining establishes foundational capabilities in reasoning, multimodal understanding, and coding. Meta rebuilt its pretraining stack with improvements in architecture, optimization, and data curation.

These changes let the model reach performance comparable to Llama 4 Maverick with more than an order of magnitude less training compute.

2. Reinforcement learning

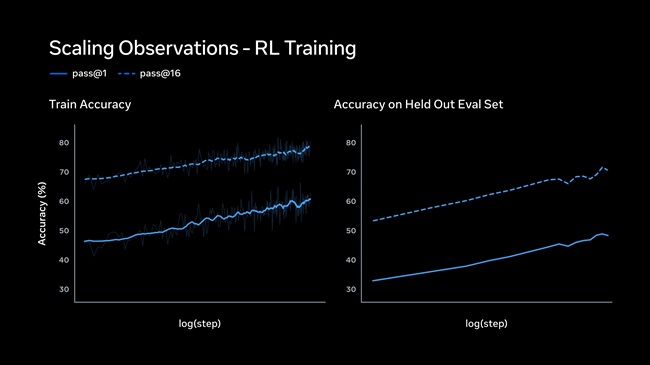

Reinforcement learning is applied during post-training to refine outputs. Although reinforcement learning at scale is often unstable, Muse Spark shows stable and predictable improvements, including:

- Log-linear gains in pass@1 and pass@16 metrics

- Improved reliability without reducing reasoning diversity

- Generalization to unseen evaluation datasets
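For readers unfamiliar with the pass@k metrics mentioned above, the sketch below shows the widely used unbiased estimator: given n sampled answers to a problem, of which c are correct, it estimates the probability that at least one of k samples is correct. The article does not say how Meta computes these numbers, so this is an illustration of the standard definition rather than Meta's evaluation code; the function name `pass_at_k` is chosen here for illustration.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k from n samples of which c are correct.

    Uses the standard combinatorial estimator 1 - C(n - c, k) / C(n, k).
    This is an illustrative helper, not code from Meta.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k incorrect samples: any draw of k must include a correct one.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)
```

With k = 1 the estimate reduces to the fraction of correct samples (c / n), which is why pass@1 is usually read as a reliability measure, while pass@16 reflects whether any of many attempts succeeds.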

3. Test-time reasoning

Test-time reasoning concerns how the model works through a query before it generates a response.

Meta uses:

- Thinking time penalties to optimize token usage

- Multi-agent orchestration to improve reasoning without increasing latency

In some evaluations, the model's reasoning shifts from longer traces to compressed ones that use fewer tokens; it then expands its solutions again, and performance improves further.
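One way to picture a thinking time penalty is as a reward that subtracts a small cost for reasoning tokens beyond a budget. The sketch below is purely hypothetical: the article does not describe the form of Meta's penalty, and the function name, the budget, and the per-token cost are all assumptions made for illustration.

```python
def penalized_reward(
    correct: bool,
    thinking_tokens: int,
    token_budget: int = 2000,          # hypothetical budget, not a Meta value
    penalty_per_token: float = 0.0001,  # hypothetical cost, not a Meta value
) -> float:
    """Return a task reward reduced by a linear penalty on tokens over budget."""
    base = 1.0 if correct else 0.0
    # Only tokens beyond the budget are penalized; shorter traces cost nothing.
    overage = max(0, thinking_tokens - token_budget)
    return base - penalty_per_token * overage
```

Under a reward of this shape, a correct answer reached within the budget scores higher than the same answer reached with a much longer trace, which gives the model an incentive to compress its reasoning.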

Meta AI Integration

Muse Spark powers updates across Meta AI, including new interaction modes and workflows.

Instant and Thinking modes

Users can choose between:

- Instant mode for quick responses

- Thinking mode for deeper reasoning tasks

Multi-agent workflows

Meta AI can deploy multiple agents simultaneously. For example, in trip planning:

- One agent generates itineraries

- Another compares destinations

- A third identifies activities

These tasks are handled in parallel to improve response quality and speed.
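The parallel pattern described above can be sketched with Python's `asyncio`. This is a minimal illustration, not Meta's implementation: `run_agent` is a hypothetical stand-in that sleeps briefly instead of calling a model, and the role names simply mirror the trip-planning example.

```python
import asyncio


async def run_agent(role: str, request: str, delay: float = 0.01) -> str:
    """Hypothetical agent: waits briefly in place of a real model call."""
    await asyncio.sleep(delay)
    return f"{role}: draft for '{request}'"


async def plan_trip(request: str) -> dict[str, str]:
    """Run the three sub-tasks concurrently and collect results by role."""
    roles = ["itinerary", "destination comparison", "activities"]
    # gather() schedules all agents at once and returns results in input order.
    results = await asyncio.gather(*(run_agent(role, request) for role in roles))
    return dict(zip(roles, results))


if __name__ == "__main__":
    print(asyncio.run(plan_trip("weekend trip")))
```

Because the three calls overlap, the total wait is close to that of the slowest agent rather than the sum of all three, which is the latency benefit the parallel design aims for.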

Multimodal interaction

Users can interact with Meta AI using images. The system can:

- Analyze uploaded photos

- Identify objects and scenes

- Provide contextual explanations

- Assist with real-world visual queries

This capability is also expected to extend to AI glasses, enabling real-world perception.

Muse Spark also supports visual coding, allowing generation of websites, dashboards, mini-games, and simulations from prompts.

Safety and Evaluation

Muse Spark has undergone evaluation under Meta’s Advanced AI Scaling Framework, covering threat models, behavioral alignment, and adversarial robustness.

Key findings include:

- Strong refusal behavior in high-risk domains such as biological and chemical threats

- Use of pretraining filters, post-training alignment, and system-level safeguards

- No observed autonomous capabilities linked to cybersecurity or loss-of-control risks

- Performance within defined safety thresholds

A third-party evaluation by Apollo Research found that the model was highly aware of evaluation scenarios, sometimes identifying them as “alignment traps.” Meta noted that this finding does not affect deployment decisions but requires further study.

Looking Ahead

Muse Spark is the first model in Meta’s Muse series, with future development focused on scaling capabilities across models and platforms.

Planned developments include:

- Expansion to additional countries

- Integration across Instagram, Facebook, Messenger, WhatsApp, and AI glasses

- Richer outputs combining Reels, photos, and posts within responses

- Attribution to content creators

- Private API preview for selected partners

- Plans to open-source future versions of the model

- Continued improvements in safety and privacy frameworks

Availability

Muse Spark is available through:

- meta.ai

- Meta AI app

Contemplating mode is rolling out gradually on meta.ai, with access expanding over time.