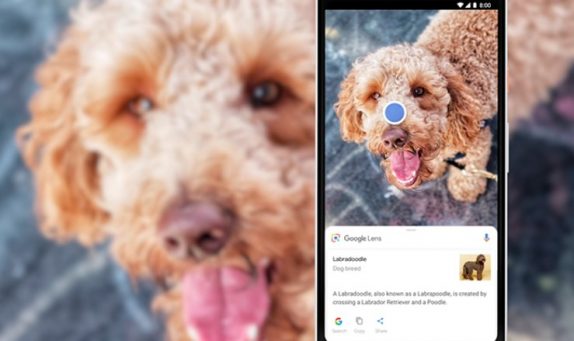

Not everything we want or need to know is available online; sometimes the answers are in the real world, right in front of us. As with most things, Google has a solution for this too: Google Lens, which it introduced at the Pixel event last October.

While Google Lens is already available in Photos and Assistant, Google wants to make it easier to access. At the I/O event yesterday, the company announced that Lens will now be available directly in the camera app on supported devices from LG, Motorola, Xiaomi, Sony Mobile, HMD/Nokia, Transsion, TCL, OnePlus, BQ, Asus, and Google Pixel.

Apart from this, Google is introducing three new features. The first is smart text selection: you can copy and paste text from the real world, such as recipes, gift card codes, or Wi-Fi passwords, to your phone, and Lens will help by showing you relevant information and photos. This requires recognizing not only the shapes of letters but also the meaning and context of the words, and here Google is putting its years of language understanding in Search to work.

Second, Google Lens will now display not only information on a specific item, such as reviews, but also other things in a similar style. Lastly, Lens now works in real time, meaning you will be able to browse things around you in the real world just by pointing your camera. Google is using machine learning, with both on-device intelligence and cloud TPUs, to identify billions of words, phrases, places, and things. These new Google Lens features will roll out in the next few weeks.