Facebook today published its internal enforcement guidelines to help users better understand where it draws the line on nuanced issues. It also hopes that publishing the guidelines will make it easier for experts in different fields to give feedback so that the company can improve the standards.

Facebook currently has 11 offices around the world where the content policy team is responsible for developing the Community Standards. The team includes subject matter experts on issues such as hate speech, child safety, and terrorism. Facebook says it wants users to feel safe to connect with people, have conversations, and express their opinions freely. To that end, identifying potential violations of its standards is the primary goal, and it relies on a review system to do so.

For its review system, Facebook uses a combination of artificial intelligence and reports from people to identify posts, pictures, or other content that likely violate the Community Standards. Right now Facebook has more than 7,500 content reviewers, over 40% more than at this time last year.

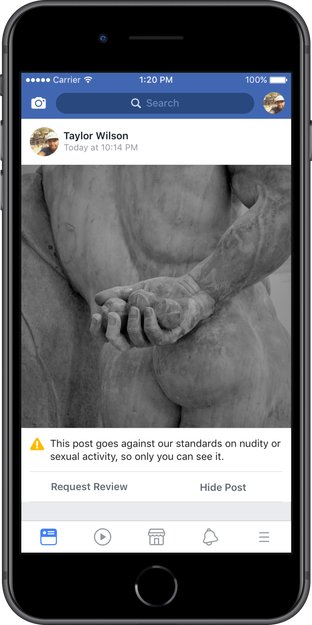

Another challenge is accurately applying the policies to content that has been flagged. In case Facebook makes a mistake, it is also introducing an appeals system over the coming year, building the ability for users to appeal its decisions. As part of this initiative, it is launching appeals for posts that were removed for nudity or sexual activity, hate speech, or graphic violence.

Facebook also said that it is working to expand this process further by supporting more violation types and giving users the opportunity to provide more context, so that mistakes can be rectified.